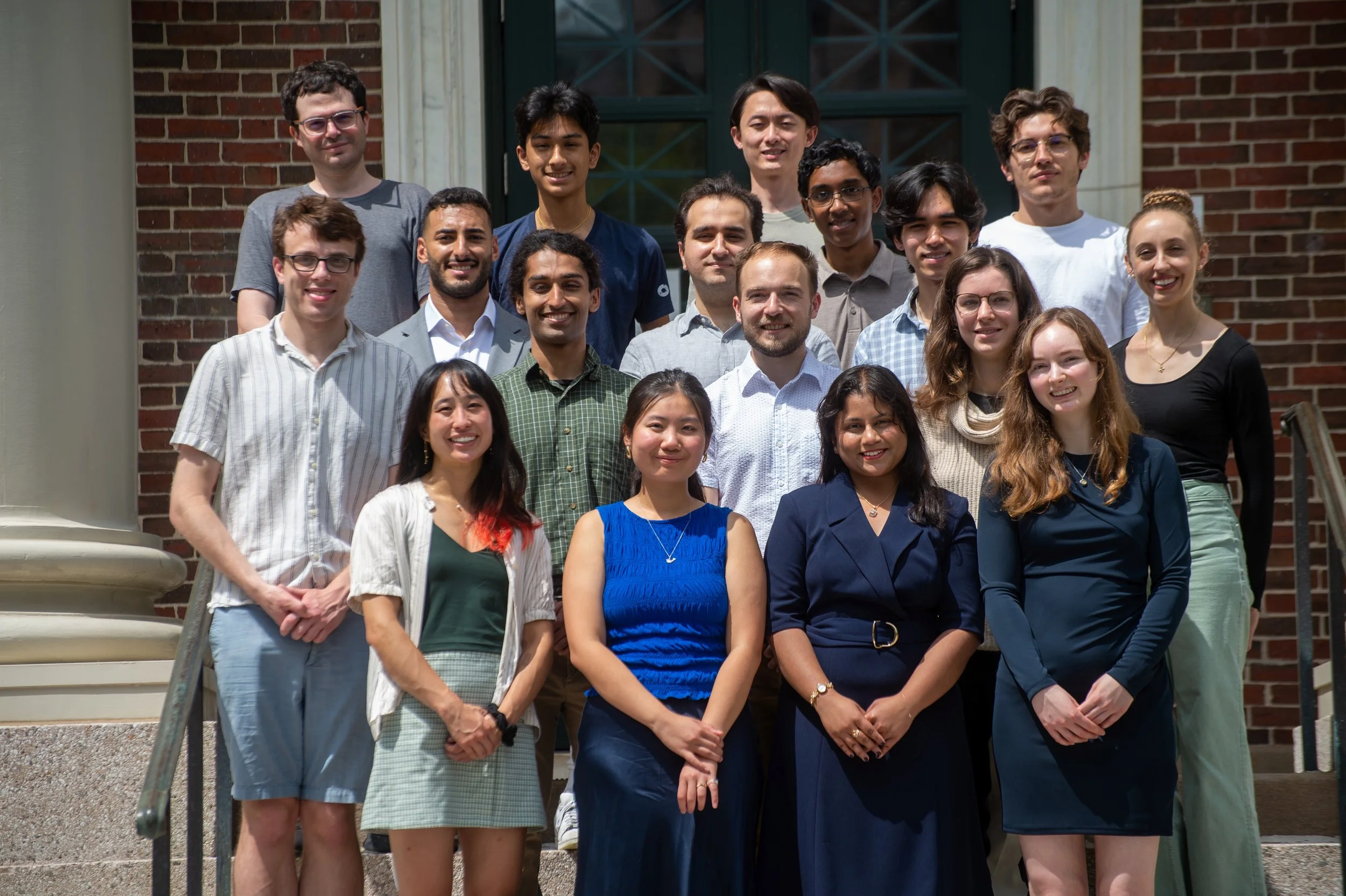

CBAI Summer Research Fellowship in AI Safety ’26

The Cambridge Boston Alignment Initiative Summer Research Fellowship in AI Safety is an intensive, fully-funded, nine-week research program hosted in Cambridge, Massachusetts, from June 8 to August 10.

It is designed to support talented researchers aiming to advance their careers in AI safety, covering interpretability, multi-agent safety, formal verification, risk management frameworks, and other technical and governance domains. Fellows work closely with established mentors and in-house research managers, participate in weekly workshops and speaker events, and gain research experience and networking opportunities within the AI safety community at institutions like Harvard, MIT, Northeastern, and Boston University, as well as at leading AI safety organizations.

Members of our inaugural fellowship cohort have joined Goodfire and Redwood, established their own research group, had papers accepted at NeurIPS and ICLR, and shared their reports with policymakers in DC.

We host a speaker event series under the fellowship program.

Speakers for the previous cohort included:

- Joe Carlsmith (Anthropic)
- Ekdeep Singh Lubana (Goodfire)
- Stewy Slocum (xAI)
- Max Nadeau (Coefficient Giving)

What We Offer

- $10,000 over the nine-week fellowship.
- For participants coming from outside the Boston Metropolitan Area, we will arrange housing for the entire program through Harvard dorms and Airbnb.
- Free weekday lunches and dinners, plus snacks, coffee, and beverages.
- 24/7 office access in Harvard Square, a few minutes from Harvard Yard.
- Weekly individual mentorship (1–2 hrs/week) from researchers at renowned institutions such as Harvard, MIT, Northeastern, Boston University, Goodfire, AVERI, Institute for Progress, and others.
- Dedicated in-house research managers providing high-touch research support to strengthen your research skills, clarify your research direction, and support your career trajectory in AI safety research.
- Weekly speaker event series with renowned researchers in the field, plus events, workshops, and socials with the AI safety research community in the city.
- Compute support of up to $10,000 per fellow in the form of API credits and on-demand GPUs, as well as conference support should you submit a paper to a conference or workshop.
- For those interested in continuing their research, we offer an extension program of up to six months.

Summer 2026 Mentors

- Andreea Bobu (Assistant Professor, MIT CSAIL)
- Deric Cheng (Director of Research, Windfall Trust)
- Sara Fish (PhD Candidate, Harvard University)
- Dylan Hadfield-Menell (Associate Professor of EECS, MIT CSAIL)
- Shayne Longpre (Lead, Data Provenance Initiative)
- Ekdeep Singh Lubana (Member of Technical Staff, Goodfire)
- Jayson Lynch (Head of Algorithms, MIT FutureTech)
- Sean McGregor (Co-Founder, AVERI; Founder, AI Incident Database)
- Aaron Mueller (Assistant Professor of Computer Science, Boston University)
- Simon Mylius (Researcher, MIT FutureTech)
- Jonathan Rosenfeld (Head of AI, MIT FutureTech)
- Alexander Saeri (Director, MIT AI Risk Initiative)
- Gabriele Sarti (Postdoctoral Researcher, Northeastern University)
- Ben Schifman (Senior Technology Fellow, Institute for Progress)
- Natalie Shapira (Postdoctoral Researcher, Northeastern University)
- Weiyan Shi (Assistant Professor of Computer Science, Northeastern University)
- Chandan Singh (Senior Researcher, Microsoft Research)
- Peter Slattery (Lead, MIT AI Risk Repository; Researcher, MIT FutureTech)
- Hidenori Tanaka (Group Leader, Physics of Intelligence Program at Harvard University)
- Max Tegmark (Professor of Physics, MIT)
- Philip Tomei (Research Director, AI Objectives Institute)
- Kevin Wei (Adjunct Staff, RAND)

Who Should Apply?

We welcome applications from anyone deeply committed to advancing the safety and responsible governance of artificial intelligence. Ideal candidates include:

- Undergraduate, Master's, and PhD students, as well as postdocs, looking to explore or deepen their engagement in AI safety research.
- Recent graduates who are aiming to transition into technical or governance AI safety research.
- Individuals who are passionate about addressing the risks associated with advanced AI systems.

We think the most promising undergraduates are severely neglected in the field and can achieve great things. This summer, we will put a heavy emphasis on undergraduate candidates, as some mentors will have undergraduate quotas, and we encourage undergraduates to apply.

Eligibility: If you are an international student in the US, we accept OPT and CPT, but we are unable to sponsor visas for this program.

Referral: If you refer a successful fellow to us, you can get a $100 Amazon gift card!

Note: Participants must be 18 years or older to apply for this fellowship.

Application Process

Our application process consists of four steps:

1. General application form
2. 15-minute interview with CBAI
3. Mentor-specific question, test task, or code screening (if applicable)
4. An interview with the mentor

We will review applications on a rolling basis.

Please apply at your earliest convenience.

Our Research Tracks

- Research focused on reducing catastrophic risks from advanced AI, including alignment strategies, interpretability, robustness, formal verification, multi-agent safety, and scalable oversight.
- Research and analysis aimed at shaping how AI is developed, deployed, and governed, including policy frameworks, regulatory approaches, international coordination, and institutional mechanisms for managing risks from powerful AI systems.
- Research at the intersection of technical AI safety and policy, including compute governance, model evaluations, standards development, and institutional mechanisms for ensuring advanced AI systems are safe and beneficial.
- Research on the economic causes and consequences of advanced AI development, spanning empirical and modeling approaches to growth, productivity, and labor market dynamics, as well as policy questions around industrial strategy, market concentration, and the political economy of AI governance.

For more information or questions, please reach out to emre@cbai.ai.

Frequently Asked Questions

- Unfortunately, no. Under US law, participants without work authorization cannot join our fellowship program. If you do not have work authorization (for example, as a permanent resident, an H-1B visa holder, or a student with OPT/CPT), we cannot have you in the program.

- Your day will typically begin at our co-working space located in Harvard Square, where you’ll dive into your research project. Around lunchtime, you will attend the weekly speaker event series with virtual or in-person guests. Afternoons usually involve dedicated deep-work sessions focused on your research projects. In the evenings, fellows frequently gather for dinner, followed by various group activities, ranging from paper reading groups to board game nights.

  Throughout the week, you'll also have scheduled one-on-one check-ins with your research manager and members of the CBAI team, coffee chats with other fellows, and numerous opportunities to engage with other fellowship events.

- Fellows are expected to produce written outputs suitable for sharing publicly. These could take the form of blog posts, interactive demos (if applicable), pre-prints, and paper submissions to ICLR, ICML, NeurIPS, and workshops at these conferences.

  While there are no rigid guidelines concerning the format or scope of these outputs, we will actively support fellows by identifying relevant conference opportunities, such as NeurIPS, and assisting them in showcasing their research to broader AI safety and academic communities.

  We anticipate this fellowship will lead to ongoing mentorship relationships and foster new collaborations among fellows, providing a foundation for continued engagement and future joint projects.

- We will organize a speaker series featuring renowned researchers, peer researchers in the Cambridge area, and virtual speakers, to expose you to frontier research and other research areas that you might be interested in pursuing after the fellowship.

  We will also organize a range of activities, including networking events with researchers in the CBAI network and in the area, research workshops, and wargames.

  Every week, we will host socials and outings in the city, as well as board game nights and other activities.

  You'll also have opportunities to interact regularly with AI safety researchers from institutions such as Harvard and MIT by attending events co-hosted with research groups at those universities. If you’re working with a mentor in a lab, you’re expected to use their facilities 1–2 times a week to expose yourself to other research and discussions in the group.

  This summer, CBAI will also organize an AIxBiosecurity Research Fellowship Program, with approximately 45 participants across both programs, creating a dynamic environment with frequent activities and deep discussions.

  Through MAIA and AISST, AI safety student groups that are fiscally sponsored and operated by CBAI, we will host networking events and socials for junior researchers.

- We’ll accept 30 fellows for our AI safety research fellowship program and 15 fellows for our AIxBiosecurity research fellowship program; the latter will be released in mid-April.

  Although the two programs are technically separate, our offices are two minutes from each other, and fellows will stay in the same building and attend mostly the same speaker events and socials.

- While CBAI fiscally sponsors and operates the AI Safety Student Team at Harvard and MIT AI Alignment, we are not officially affiliated with Harvard or MIT.

  However, we work closely with research groups and labs at both universities and at others, including Northeastern and Boston University. Fellows working with mentors from labs at these universities may receive affiliate cards for access to the premises.

  Note: If you are a student at a university affiliated with BorrowDirect, you are eligible for a library card granting access to the libraries at Harvard and MIT.

- For those joining us from outside the Cambridge-Boston area, we will provide housing through Harvard dorms located a few minutes away from our offices.

  If you prefer to arrange your own housing, please contact us; we may be able to provide housing reimbursement.

- Mentor matching is a key component of our program, ensuring each fellow receives dedicated support and guidance.

  However, in rare cases, we might extend a conditional offer to candidates after the interview round. This offer would be conditional upon receiving an offer from either one of the mentors listed on our website or an external mentor approved by the fellowship organizers.

- Our default expectation is that all fellows participate in person for the full duration of the fellowship.

  However, we understand there may be special circumstances, and we are open to evaluating these on a case-by-case basis.

- Successful applicants typically demonstrate clear alignment with the goals and values of our program: a well-articulated interest in AI safety, strong motivation for pursuing research in this area, and a very clear career plan for after the fellowship.

  To strengthen your application, clearly outline your relevant background, skills, and past experiences, explain specifically why you're interested in this fellowship, and highlight the impact you hope to achieve through your participation, as well as how you will use this fellowship to advance your plans after the program.

- Your research manager provides ongoing support with project management and progress tracking, and may offer research guidance depending on their background. Your mentor is an external expert matched to your specific research area, offering specialized domain knowledge, targeted feedback, and substantive input on your research direction.

- Barring exceptional cases (e.g., someone with a disability that would make attending in person extremely difficult for them), we will not accept virtual candidates.

  We do not allow part-time participation.